Intro

The disposable nature of artificial intelligence infects all current products, and it is their greatest flaw. In this article, I will explore why our most advanced tools suffer from “digital amnesia”, how we can fix it today using open-source tools (with a demo and code), and why the future of our digital identity depends on the battle between privacy and convenience.

Fragmented Intelligence

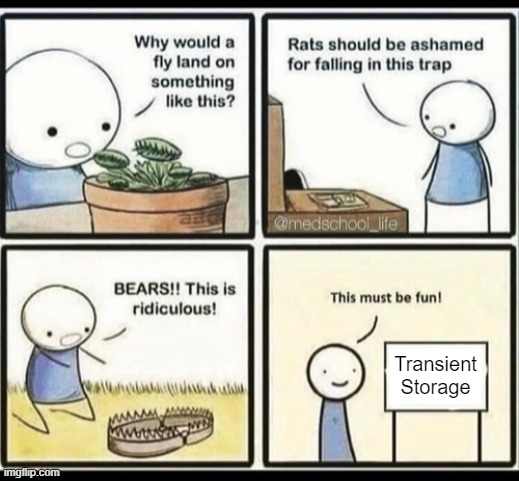

We are currently witnessing a fragmentation of intelligence. Every time you open a new AI tool, you are forced to introduce yourself all over again. You explain your coding style to one, your dietary restrictions to another, and your personal goals to a third. Current AI models are brilliant but “amnesiac”: they exist in silos, treating every session as a blank slate or, at best, trapping your history within a single proprietary walled garden.